Via Shane Dawson on YouTube

In the last few years, conspiracy theories have spread like wildfire and seem to pop up everywhere you look. The internet is rife with them, and they’ve infiltrated our political lives and, for some people, their personal lives too. The theories themselves might seem ridiculous or even humorous, but believing in them feeds a growing disillusionment with our governmental systems and with science itself. This is increasingly dangerous, and it’s no coincidence that these theories are so well suited to the way our brains work.

Merriam-Webster defines a conspiracy theory as the belief that an event or phenomenon is being controlled or influenced by one or more parties conspiring against the general public, and we are all capable of buying into one under the right conditions. People don’t all believe in conspiracies for the same reasons, but a general distrust of science is a large part of it. Many of us feel let down by, and suspicious of, scientists and scientific advancements. This isn’t entirely unreasonable: science and medicine aren’t free of their own biases, and, especially if you have a history of feeling unheard by doctors, it’s easy to believe that they might have ulterior motives.

The example on all of our minds is the COVID-19 vaccine. Anti-vaccine sentiment had already been rising, but it reached a fever pitch during the pandemic. According to the Pew Research Center, distrust of science doubled from 2020 to 2021. Theories about COVID-19 were inescapable and, from the outside looking in, it often seemed ridiculous that anyone could believe them. But the truth is, anyone can fall prey to conspiratorial thinking. In many cases, a lack of education about how science works makes it harder to distinguish fact from fiction, and this gets worse when people are learning from vastly different, often inaccurate sources.

Science communication has a major problem when it comes to reaching the general public. Scientists are trained to use cautious, complex language so they can be as exact as possible, but that kind of writing is hard to grasp and inaccessible for many people. People buy into the information that fits their cognitive biases, and that information often isn’t coming from actual experts.

As we all know, the internet is designed to keep us online as long as possible, and recommendation algorithms are tailor-made to keep us hooked. Platforms and creators make money off of your attention, so even when their content is about science or news, they aren’t really trying to teach you things; they’re trying to get the most clicks. This translates into exaggerations, clickbait titles, and AI-generated images.

The internet is ripe, almost rotten, with content that appeals to what psychologists call salience bias. Cognitive biases exist because our brains take shortcuts, letting us focus our limited perceptual and cognitive resources on the things that feel most important. Salience bias, in particular, means we focus on whatever stands out, such as outliers, over more ordinary information. Our survival mechanisms evolved to give striking visual or emotional stimuli more of our attention, which lets the brain filter out irrelevant stimuli and make split-second decisions. In the case of conspiracy theories and algorithms, however, it means we remember the most attention-grabbing information rather than the most accurate.

On top of that, algorithms put us into bubbles that repeatedly show us content fitting what we already believe. This is great for getting fan edits of your favorite show, but when it comes to conspiracies, it gets dangerous. Confirmation bias makes us more likely to seek out, recall, and believe information that confirms the beliefs we already hold. We developed this bias because humans need self-esteem and a stable sense of self, and questioning your worldview makes everything feel off-kilter. But it also means we’re more likely to believe a theory if it already aligns with that worldview, and much more likely to overlook a lack of evidence if it’s something we want to believe. For example, if you already believe that the government hides things from us, it isn’t a big leap to believe that it’s hiding the existence of aliens from the general public, despite a lack of proof. And when you’re shown increasingly radical content aligned with your belief system, you can get pulled into beliefs that are further and further removed from reality. This is part of why political extremism, which goes hand in hand with conspiratorial thinking, has spread so rapidly through social media.

Algorithms work by showing you more of what you’ve already interacted with; the moment you’re flagged as interested in a certain type of content, you’ll be shown more of it. You might not believe something far-fetched the first time you see it, but if it keeps popping up, you may start, even unconsciously, to give it more credibility. This is called the illusory truth effect: hearing or seeing something repeatedly makes it feel familiar and, eventually, true.

Humans also tend to overestimate how intentional actions or events are. Psychologists call this intentionality bias, and it is closely related to the fundamental attribution error, our tendency to explain other people’s behavior by their character and intentions rather than their circumstances. We want to believe that things happen by design and for a reason, whereas science is usually anything but that tidy. Science is often muddled and uncertain, constantly evolving as new research and studies come in. While that’s what makes science fascinating, it’s also what makes it hard for people to get on board with.

“Uncertainty scares us, makes us feel existential, and overall reduces our capacity to keep going with daily life.”

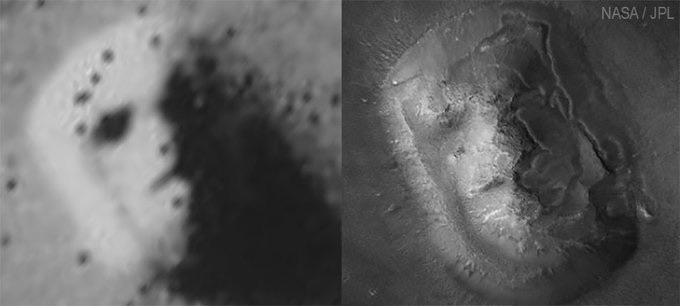

To cope with this, we attribute meaning to things that may not have any. For example, in 1976 NASA took a photo of Mars in which a particular mesa appeared to resemble a face. Although later photos showed it was a geological formation like any other, it was too late. People had given meaning to the “face on Mars,” and theories about a secret civilization on the planet were, and still are, being spread.

Via NASA

Belief in conspiracies is also heavily influenced by cultural attitudes toward government. For example, the US, a country built on individualism, has historically been wary of letting the government have a say in people’s lives. This makes Americans statistically more likely to buy into beliefs about their politicians, scientists, or governments being secretly controlled by some “other”. Rising extremism appeals to our tendency to see the world as a conflict of us versus them, and when politicians further encourage that thinking, it grows out of control.

Belief in science has become part of a political ideology rather than an acceptance of facts. This is partly because of motivated reasoning: if a person you hold in high regard, like a politician, says something is true, you want to believe them over other people. When that regard turns into something more extreme, like fanaticism, people start taking that person’s word as law and ignoring all other sources of truth, especially once their fanaticism becomes part of their identity. For example, fans who have built their identity around a celebrity will often ignore, deny, and even attack criticism of, or differing opinions about, that celebrity.

One problem with conspiratorial thinking is that debunking it can legitimize the theory and give it more power. You’ll often see conspiracy theorists point to debunks as “proof” that their theory is being covered up. So what can we even do? The best way to combat this wave of misinformation and plainly untrue theories may be to find more effective ways of spreading facts, ones that appeal to our cognitive biases. While this may sound counterintuitive, fighting fire with fire might be our best way forward. Content vetted by experts, but tailored to appeal to the algorithm and to our brains, might be the most effective way to combat conspiratorial thinking. Of course, we’ll never be able to stamp it out entirely, but we at least need to cut back these weeds unless we want to see the entire internet overrun.
